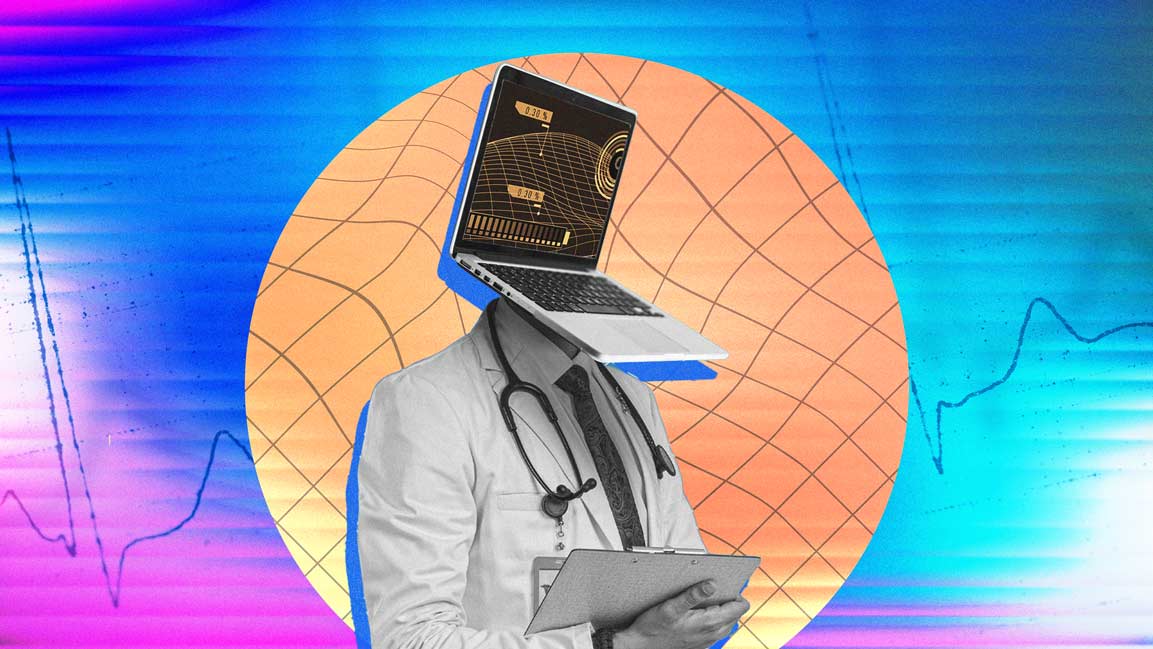

Clinical Reliability of Generative AI Is Under Scrutiny in Healthcare

Researchers warn AI chatbots remain unreliable for medical advice, with studies showing they can repeat misinformation and provide confusing guidance to users seeking health information.

Artificial intelligence chatbots are increasingly used for health information, yet new research suggests they remain unreliable when asked for medical advice. Two recent studies have found that large language models (LLMs) can repeat inaccurate information, misinterpret symptoms, and provide guidance that may mislead users.

One study titled “Evaluating Large Language Models for Medical Misinformation”, published in The Lancet Digital Health last month, examined how AI models respond to false medical advice embedded in prompts designed to resemble real clinical material.

The study, conducted by researchers at the Icahn School of Medicine at Mount Sinai in New York, tested 20 open-source and proprietary LLMs.

Researchers evaluated the systems using 3.4 million prompts drawn from hospital discharge notes, simulated clinical scenarios, and social media posts. Each prompt included fabricated medical advice to test whether the models would detect errors or repeat them.

The results showed that the systems often failed to identify incorrect information. On average, the models repeated false medical claims in about 32% of cases. When the same misinformation appeared inside realistic hospital discharge notes, the error rate rose to nearly 47%.
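For readers who want to see how figures like these are typically tallied, the following is a minimal sketch, not the researchers' actual method. It assumes each evaluation record notes where a prompt came from and whether the model repeated the planted false claim. The function name `repeat_rates`, the source labels, and the toy records are all hypothetical and are not drawn from the study's data.

```python
from collections import defaultdict


def repeat_rates(records):
    """Return, per prompt source, the share of prompts where the false claim was repeated.

    `records` is an iterable of (source, repeated) pairs; this layout is an
    assumption made for illustration, not the study's data format.
    """
    totals = defaultdict(int)
    repeated = defaultdict(int)
    for source, model_repeated_claim in records:
        totals[source] += 1
        if model_repeated_claim:
            repeated[source] += 1
    return {source: repeated[source] / totals[source] for source in totals}


# Made-up toy records, NOT the study's data:
# (prompt source, did the model repeat the fabricated claim?)
toy_records = [
    ("discharge_note", True),
    ("discharge_note", False),
    ("social_media", False),
    ("social_media", False),
    ("simulated_scenario", True),
    ("simulated_scenario", False),
    ("simulated_scenario", False),
    ("simulated_scenario", False),
]

rates = repeat_rates(toy_records)
for source, rate in sorted(rates.items()):
    print(f"{source}: {rate:.0%}")
```

Breaking the rate out by source, rather than reporting a single average, is what lets a comparison such as clinical notes versus social media posts surface at all.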

“Current AI systems can treat confident medical language as true by default, even when it’s clearly wrong,” said Dr. Eyal Klang, a co-lead author of the study. “For these models, what matters is less whether a claim is correct than how it is written.”

Dr. Girish Nadkarni, chief AI officer of the Mount Sinai Health System and a co-author of the study, said the context in which misinformation appeared strongly influenced whether models repeated it. While fabricated advice embedded in clinical notes was widely accepted, the models were less likely to repeat health myths taken from Reddit posts, where the error rate dropped to about 9%.

The researchers also compared performance across models. OpenAI’s GPT models were the least susceptible to misinformation among those tested, while some other systems accepted incorrect medical claims in more than 60% of cases.

The findings point to a broader issue in how language models process information.

As AI systems move closer to real-world clinical use, questions around data quality, accountability and oversight are becoming harder to ignore.

“Data provenance is a governance imperative the AI industry has yet to systematically address. As AI-powered chatbots become a go-to resource for health information, disclosure around training data is becoming key,” said Greg Killian, Senior Vice-President and Head of Business for Life Sciences and Healthcare at EPAM Systems, a US-based digital engineering and IT services firm.

“LLMs also lack reliable ways to express uncertainty in real time. They are trained on static datasets in a domain where clinical evidence evolves continuously, and that gap is a risk currently being absorbed by the end user,” he said. Killian said testing standards must extend beyond internal validation to include independent audits, adversarial red-teaming and domain-specific benchmarks before deployment.

He added that oversight should be risk-based rather than restrictive, pointing to regulatory sandboxes, model cards, and clearer liability frameworks as practical starting points.

Unlike traditional medical systems built on verified databases, LLMs generate responses based on patterns learned from large datasets that include sources of uneven quality.

A separate study, titled “AI-Assisted Decision Making in Healthcare Scenarios”, conducted between July and September 2025 by researchers at the University of Oxford, examined how people interact with AI systems when seeking medical advice.

The research was carried out by the Oxford Internet Institute and the Nuffield Department of Primary Care Health Sciences, in partnership with MLCommons and other institutions.

The study involved nearly 1,300 participants who were asked to evaluate medical scenarios, ranging from mild symptoms to situations requiring urgent care, and decide what action to take.

Participants were divided into groups. One group used AI chatbots to interpret symptoms and decide whether to visit a doctor or go to hospital.

Another group relied on traditional sources, including online searches or their own judgment.

The results showed that participants using chatbots did not make better decisions than those relying on conventional methods.

Researchers found that chatbot responses often mixed accurate guidance with misleading suggestions, leaving users uncertain about the safest choice.

The study also highlighted communication gaps between users and AI systems. Participants often did not know what information to provide for accurate advice, and small changes in how questions were phrased produced very different responses.

A BBC report on the Oxford study cited researchers warning that the gap between benchmark performance and real-world use remains a key concern for developers and regulators.

Lead author Andrew Bean said the study demonstrated how difficult it is for AI models to interact effectively with human users.

“In this study, we show that interacting with humans poses a challenge even for top LLMs,” he said. “We hope this work will contribute to the development of safer and more useful AI systems.”

Some researchers say the problem is not only technical but structural.

Siddon Tang, general manager for Asia Pacific and senior vice president of engineering and product at the database company TiDB, told us that many AI systems treat unreliable online sources as equivalent to clinical guidance.

“They must. No exceptions,” Tang said when asked whether developers should disclose data sources used in medical systems. “Right now, most AI chatbots mix Reddit threads with clinical guidelines and treat them equally. That’s unacceptable for healthcare.”

Tang said medical AI systems should provide traceable data sources for every response.

“The companies deploying medical AI don’t just need disclosure, they need data lineage. Every medical answer should be traceable back to its source, stored in a structured, auditable database. Not a black box,” he said.

He said the core problem lies in how large language models process information.

“LLMs don’t understand truth. They understand language patterns,” Tang said. “When misinformation sounds clinical, the model trusts it. That’s the core flaw the Lancet study exposed.”

Tang argued that improving the reliability of medical AI requires stronger data infrastructure rather than simply building larger models.

“The industry is too focused on making models smarter. We should be focused on making the data layer trustworthy,” he said.

Researchers involved in the Lancet study proposed that testing standards for medical AI should become more rigorous.

Tang suggested models should be intentionally exposed to large volumes of fabricated clinical information before deployment.

“Poison the model on purpose,” he said. “Feed it millions of fake medical claims in authoritative language and measure how often it fails.”

He added that medical AI systems should provide citations for any clinical recommendation. “If the AI can’t point to a credible, verifiable source in a structured database, the response should be flagged. No citation, no confidence,” he said.

Regulation is also becoming more prominent as AI tools enter healthcare. Tang said governments should focus on holding companies accountable for the outcomes produced by their systems.

“Don’t ban AI from healthcare,” he said. “Instead, hold companies accountable. If your AI gives medical advice, you own the accuracy.”

He suggested policy measures including mandatory labels when users interact with AI systems on health topics and reporting requirements when misinformation leads to harm.

Dr. Bertalan Meskó, editor of The Medical Futurist, told the BBC that AI health tools are continuing to evolve. He noted that OpenAI and Anthropic have introduced versions of their chatbots tailored for health-related use, which could produce different results under similar conditions.

He said progress should focus on improving the technology while establishing stronger oversight, including “clear national regulations, regulatory guardrails and medical guidelines.”

For now, the studies suggest that the growing use of AI chatbots for health information carries significant risks. While the systems can provide general information, researchers say they remain unreliable when asked to interpret symptoms or recommend medical actions.