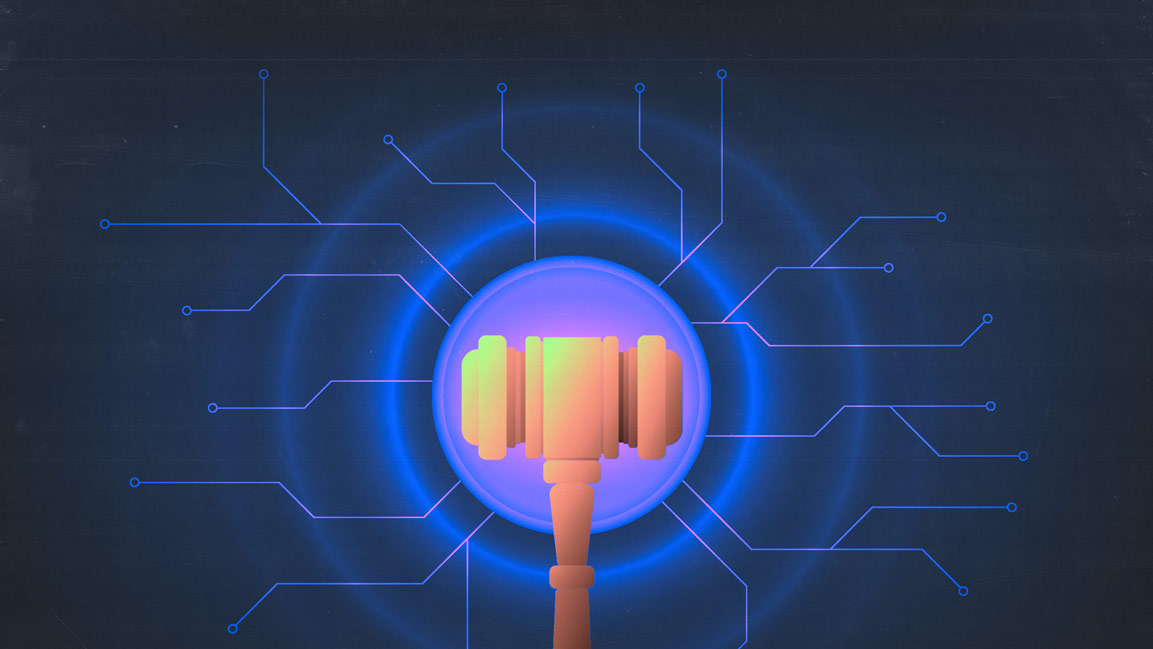

Pentagon AI Deal Sparks Clash Over Military Use of AI

Anthropic refused Pentagon demands tied to surveillance and autonomous weapons, prompting Washington to cut the company from defense work as OpenAI moved ahead with a classified deployment agreement.

Backlash to OpenAI’s Pentagon partnership spilled into the consumer market over the weekend, sending users toward rival chatbot Claude and pushing the app to the top of Apple’s U.S. App Store.

US uninstalls of the ChatGPT mobile app jumped about 295% on Monday from the previous day, according to app analytics data.

The spike in user reaction followed an escalating confrontation between Anthropic and the Trump administration after President Donald Trump ordered federal agencies on 28 February to cease using Anthropic technology, a move that effectively cut the company off from US defense work.

The order quickly escalated into one of the most consequential confrontations yet between Silicon Valley and Washington over how artificial intelligence should be used in national security systems.

Within hours of the government’s move to cut off Anthropic from defense work, the Pentagon reached a separate agreement with OpenAI to deploy advanced AI systems in classified environments.

The rapid sequence of events has triggered legal threats, employee backlash and a broader debate across the technology industry over whether private AI companies should accept government demands related to surveillance and autonomous weapons.

From Contract Talks to Blacklisting

The core dispute centered on negotiations between Anthropic and the Pentagon over how Claude would be used within classified military systems.

Anthropic had been operating under a $200 million Department of War contract since June 2024 and was the first frontier AI company to deploy models on classified U.S. government networks.

According to Anthropic, the Pentagon demanded unfettered access to Claude for any lawful use.

Chief executive Dario Amodei insisted on maintaining two exceptions.

The first was a prohibition on mass domestic surveillance of Americans.

The second was a prohibition on the use of Claude to power fully autonomous weapons.

In a blog post explaining the company’s position, Amodei wrote, “I believe deeply in the existential importance of using AI to defend the US and other democracies, and to defeat our autocratic adversaries.”

He emphasized that Anthropic had actively supported national security efforts, writing that Claude is “extensively deployed across the Department of War and other national security agencies for mission critical applications, such as intelligence analysis, modeling and simulation, operational planning, cyber operations, and more.”

He also noted that the company had cut off access to firms linked to the Chinese Communist Party (CCP) and shut down CCP-sponsored cyberattacks attempting to abuse Claude.

But he drew a firm line around two uses.

On domestic surveillance, he wrote, “Using these systems for mass domestic surveillance is incompatible with democratic values.”

He warned that AI makes it possible to assemble scattered public data into a comprehensive picture of a person’s life automatically and at massive scale.

On autonomous weapons, he said, “Today, frontier AI systems are simply not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America’s warfighters and civilians at risk.”

Anthropic said it offered to collaborate with the Department of War on research and development to improve reliability, but that proposal was not accepted.

The Pentagon, according to Anthropic, insisted it would contract only with AI companies that accede to any lawful use and remove safeguards in those two areas.

The Administration’s Response

On 28 February, President Trump announced in a Truth Social post that federal agencies must immediately cease all use of Anthropic technology.

He accused the company of attempting to dictate how the military fights wars.

“The United States of America will never allow a radical left, woke company to dictate how our great military fights and wins wars,” Trump wrote. “The Leftwing nut jobs at Anthropic have made a disastrous mistake trying to strong arm the Department of War, and force them to obey their Terms of Service instead of our Constitution.”

Thirteen minutes after a Pentagon deadline passed, Defense Secretary Pete Hegseth formally designated Anthropic a supply chain risk to national security in a post on X.

The label prohibits any contractor or supplier that works with the military from conducting business with the company.

The designation has historically been applied to foreign adversaries, not American technology firms.

Anthropic said it would challenge the decision in court.

“Designating Anthropic as a supply chain risk would be an unprecedented action — one historically reserved for U.S. adversaries, never before publicly applied to an American company,” the company said.

“We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government.”

Anthropic said it had negotiated in good faith and would ensure a smooth transition if removed from military systems. But it added that threats to invoke the Defense Production Act or label the company a security risk would not change its position.

“These latter two threats are inherently contradictory,” the company wrote. “One labels us a security risk; the other labels Claude as essential to national security.”

OpenAI Steps In

Within hours of the announcement, OpenAI said it had reached its own agreement with the Pentagon to deploy AI systems in classified environments.

In a blog post, OpenAI said, “Yesterday we reached an agreement with the Pentagon for deploying advanced AI systems in classified environments, which we requested they also make available to all AI companies.”

The company outlined three red lines: its technology cannot be used for mass domestic surveillance, to direct autonomous weapons systems, or for high-stakes automated decisions.

“We think our agreement has more guardrails than any previous agreement for classified AI deployments, including Anthropic’s,” OpenAI wrote.

Chief executive Sam Altman said the deal reflects key safety principles.

“Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems,” Altman wrote on X.

He added that the Pentagon agrees with these principles and that they are reflected in law, policy and the agreement.

After criticism that the company moved too quickly, Altman acknowledged missteps. “One thing I think I did wrong: we shouldn’t have rushed to get this out on Friday. The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy.”

Altman said OpenAI amended the deal to clarify limits on surveillance.

The agreement now states that the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals and prohibits deliberate tracking or monitoring of Americans, including through commercially acquired data.

He also said the Department affirmed that OpenAI services will not be used by Department of War intelligence agencies such as the NSA without a follow-on contract modification.

In a separate post, Altman wrote, “The democratic process must stay in control, and we must democratize AI. OpenAI should not decide the fate of the world; no private company should.”

He added that if he received what he believed was an unconstitutional order, “of course I would rather go to jail than follow it.”

Industry Backlash and User Reaction

The confrontation has rippled across Silicon Valley. Nearly 500 OpenAI and Google employees signed an open letter stating, “We will not be divided.” The letter warned that the Pentagon was negotiating with multiple companies to secure concessions.

Consumers reacted as well. US uninstalls of the ChatGPT mobile app jumped about 295% day over day after the OpenAI defense deal was announced, while downloads of Claude rose sharply following Anthropic’s refusal to remove its safeguards.

The episode highlights the deepening tension between national security imperatives and AI safety principles.

What remains unresolved is whether the courts will uphold the supply chain risk designation and how other AI firms will navigate similar demands in the future.